All Over an Unknown World flickr photo by Mantissa.ca shared under a Creative Commons (BY-NC-SA) license

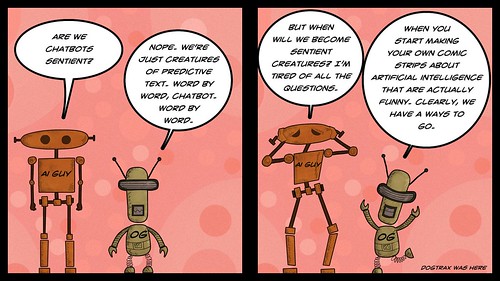

Ever since ChatGPT hit the scene, I’ve been wrestling with a strange dilemma as a teacher: how to talk about it all in my sixth-grade classroom. My students are too young to be trying ChatGPT, for sure, and yet it seems foolish to put my head in the sand and think none of them are skirting the age requirement (it’s 18, I believe) to play around with it. I am certain some are. And if they are, then they need information and guidance.

But then I think, if any of them have NOT yet heard of it, maybe that’s a good thing for now, keeping the 11-year-olds of the world a little more ignorant of the AI earthquake that has hit society.

But then I think, don’t I owe it to them, as a trusted adult, to lead a discussion about what ChatGPT is, how it works, and the ethical dilemmas around plagiarism and more that learners and educators everywhere are grappling with? I have always been the one to talk about technology and social media with them. Why not now?

Now add in how to best help families navigate this new world with their children at home.

Sigh.

Thanks to my explorations with ETMOOC, I’ve decided that I need to talk about it. The Day of AI, which takes place tomorrow, gave me some lesson ideas and tools to share with students that explain how AI works and how ChatGPT works, and that explore some of the ethics of AI. I intend to give them two relatively safe AI tools to play with to get a feel for it: Byte Chat AI, a school-friendly chatbot site, and Scribble Diffusion, a scribble-to-digital-art site.

I am also working on an email to families to let them know about our inquiry and to share some resources on how to talk with their children about the rise of AI chatbots, opening lines of discussion about whether and how to use them.

Lord, I hope this is the right move.

I think it is, and I am confident in the ways I can guide our discussions and inquiry in the classroom, even at this young age. I guess I don’t think ignorance or denial is the way to go, on my part. They need the tools to navigate, and who better than a human teacher to guide them forward?

Peace (and Questions),

Kevin