If you and I were to sit in a room together — say, over a cup of coffee — I bet we could collaborate on something interesting. A story. Or a short video. Or an image. A play. A comic. Maybe even a podcast. We’d bounce ideas off each other and emerge with something interesting, if only a story to tell about what we did. We’d make something to represent our relationship as friends and colleagues and collaborators.

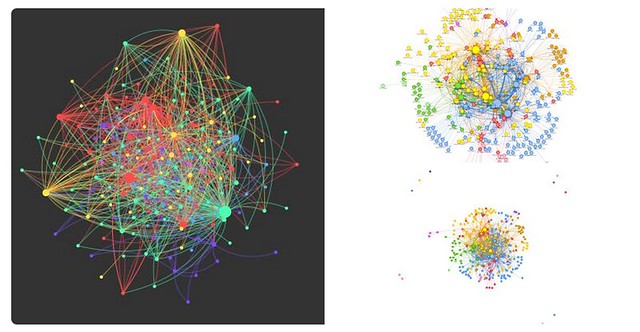

Online communities, if nurtured, can facilitate that impulse to make things, too, and it is truly one of the main elements that has me diving into so many adventures like the current Rhizomatic Learning experience. The collaboration element is the draw for me, as it was with Connected Courses, and as it was with last year’s version of Rhizomatic Learning, and as it has been for DS106, and as it has been for the last two years of the Making Learning Connected MOOC.

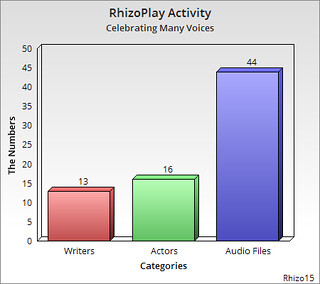

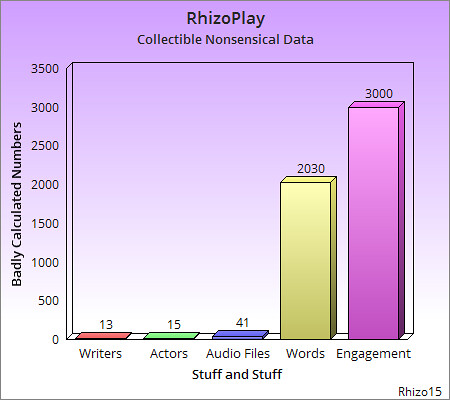

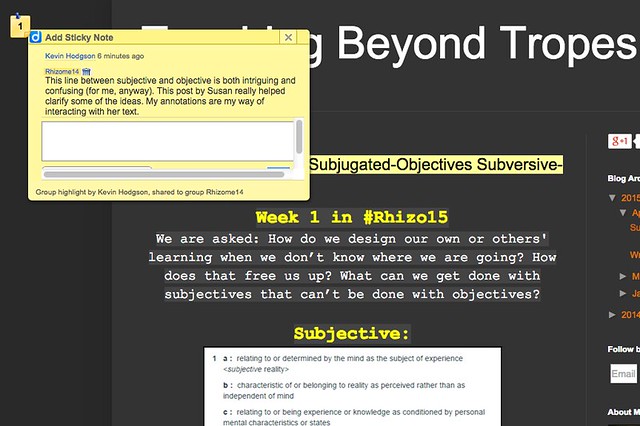

So, when Tania started to write a play about the subjective learning/objective experience for the first week of Rhizomatic Learning, and someone suggested her play be put into Google Docs, and then other writers (such as myself) began adding to the play, and then someone suggested this whole piece of collaborative writing be turned into a podcast radio play, and then someone said maybe I could coordinate it ... I said, sure, I can do that.

Collaboration is nurtured by the idea of people stepping up and saying, Sure, I can do that. Even if you are not quite aware of what you are taking on. Even in online communities, where your only interaction is often a tweet, or a status update, or a blog post. If you let it happen, you can quickly build up trust among participants. And that trust allows you to take chances on something unknown. It opens the path for collaboration.

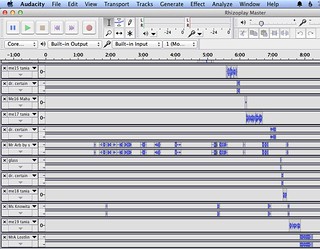

True, the complexities of online collaboration can suddenly loom quite large in this kind of undertaking, where you find yourself both alone with your computing device and among many collaborators. Thankfully, I was on April break from school this week, and in between family time and other commitments, I was working on pulling together dozens of audio files as people took on parts, recorded their words, and shared them online. I would then grab the audio, pull it into Audacity, and try to line things up in a way that would be coherent and weave a tapestry of voices. I was honored to be put in charge of this task, and took my role rather seriously (well, as seriously as I can) as the curator of the voices and the collective words and spirit of the play. I also realized early on how systematic I needed to be, with organizing files and with layering the voices. Otherwise, I would have slipped into file madness (it may have happened … I’m not saying).

My aim as editor was to help nurture into place a radio play that we would all be proud of, no matter how large or how small a role each of us played, whether as a writer, a reader, a commenter, or a voice actor. Cheerleader, too. The radio play will become an important artifact of content (the subject of the play) and collaboration, and of the possibilities of true rhizomatic learning, in which so many roots were mingled together to produce something quite beautiful. Which is not to say the process wasn’t messy and a bit of mayhem.

Still, the many hours I spent in editing mode (and I calculated about six to eight hours of messing with audio) were time well spent. Even the music was original … from the underlying soundtrack (I created it in an app called Musyc) that I hoped would musically represent the diversity of voices and add to the tension of the interaction of characters, to the acoustic version of a song written for Rhizo15 earlier. Terry had a great song earmarked for the ending (an Arabic version of Toy Story and “You’ve Got a Friend in Me”), but I could not figure out if it would violate copyright if we used it, so we abandoned it. As I told Terry, it probably is best, as Michelle Shocked wrote in one of her songs, to make your own jam anyway.

I think we will all be proud of this collaborative endeavor. There is something very powerful in “voice” and not enough of us are doing podcasting to bring that element to the surface. Here, the multitude of voices adds up to something unique, a weaving of community that is difficult to explain. You have to hear it. You have to listen. You have to imagine. Terry had a great idea that we could not pull off: he wanted all the voice actors to gather together in a Google Hangout, and read the play live on the air, online. Then, we could strip the audio from the video, and use that as a podcast. This is where geography played against us. Too many time zones. It was a great idea that never came to fruition.

Dave Cormier, the facilitator of Rhizomatic Learning, will showcase the premiere of the radio play — A Multitude of Voices: Mr. X Loses His Battle for Objectivity — in his next post and message to the entire #rhizo15 community, probably sometime later today, or very soon (maybe even by the time you read this post). We hope you will sit back, enjoy the multitude of voices, and wonder at the collaboration. If you were one of the many who participated, then I want to extend a warm “thank you” for your contributions. It was lovely to play with our voices and our words.

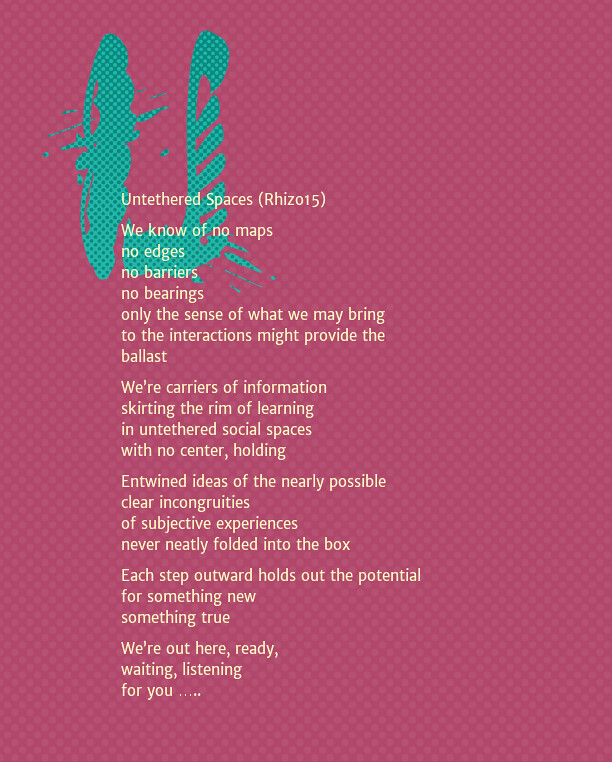

Until the release of the play itself, which I will embed here another day in its full glory, I have sprinkled some promotional media for the play in this blog post. A few of us were having fun making media for the virtual promotional tour in the last few days to spark interest in the play and in the nature of collaboration. Keep an eye on Dave, and … enjoy the show.

Peace (in the collaboration),

Kevin