(NOTE: This post is a tutorial as part of Write Out, April 2024)

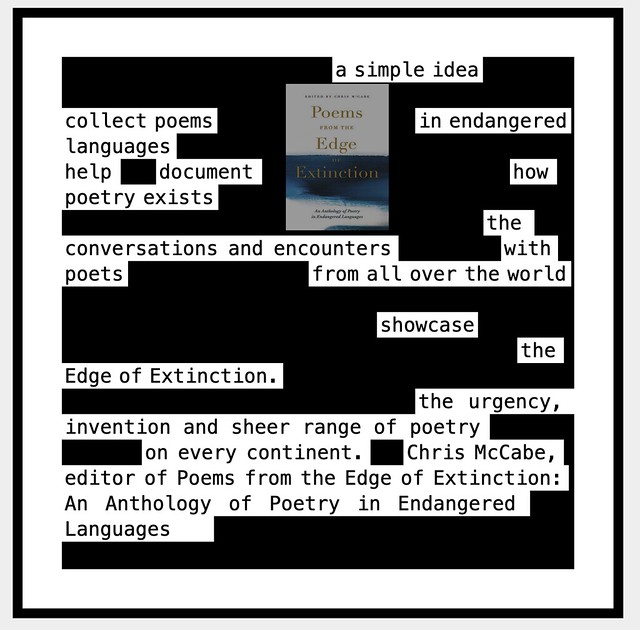

Here is one way to create a blackout/erasure poem, which is particularly fitting when the Solar Eclipse comes through and the moon “erases” or “blacks out” part of the Sun. Get it?

For mine (above), I used some text generated by ChatGPT in which it explains what a Solar Eclipse is. You may want to find some other text, or perhaps use the Wendell Berry poem “To Know the Dark” as your main text.

This is what ChatGPT gave me for my activity:

A total solar eclipse occurs when the moon passes directly between the Earth and the sun, obscuring the sun’s entire disk and casting a shadow on Earth’s surface. This extraordinary celestial event unfolds as the moon aligns perfectly with the sun, blocking its light and creating a temporary darkness known as totality within a narrow path on Earth’s surface. During totality, the sun’s corona, the outermost layer of its atmosphere, becomes visible, appearing as a shimmering halo around the obscured sun. Total solar eclipses are rare and captivating phenomena that captivate observers with their awe-inspiring beauty and serve as a reminder of the intricate dance of celestial bodies in our solar system.

I took that text and put it into the Blackout Poetry Maker over at Glitch. It’s a simple site to use. Just add the main text, and then choose the words and phrases that you want to remain on the screen. You can either download the final poe
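If you would rather try the same idea in code, here is a minimal sketch in Python. It is not how the Blackout Poetry Maker works under the hood (I don't know that); it is just one way to get a similar effect. The function name `blackout`, the `keep` set, and the block character are all made up for this illustration, and it keeps every occurrence of a chosen word rather than letting you click on individual words.

```python
import re


def blackout(text, keep, block="█"):
    """Hide every word that is not in `keep` behind block characters.

    Hypothetical helper for illustration: `keep` is a set of words to
    leave visible (matched case-insensitively). Punctuation and spaces
    are left alone, so the shape of the original text is preserved.
    """
    keep_lower = {word.lower() for word in keep}

    def mask(match):
        word = match.group(0)
        if word.lower() in keep_lower:
            return word  # this word stays on the page
        return block * len(word)  # same length, fully covered

    # Treat runs of letters (plus straight or curly apostrophes) as words.
    return re.sub(r"[A-Za-z'’]+", mask, text)


sample = "the moon passes directly between the Earth and the sun"
poem = blackout(sample, {"moon", "sun"})
print(poem)
```

Because each hidden word is replaced by the same number of block characters, the poem keeps the layout of the source text, which is part of what makes a blackout poem look like a blackout poem.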

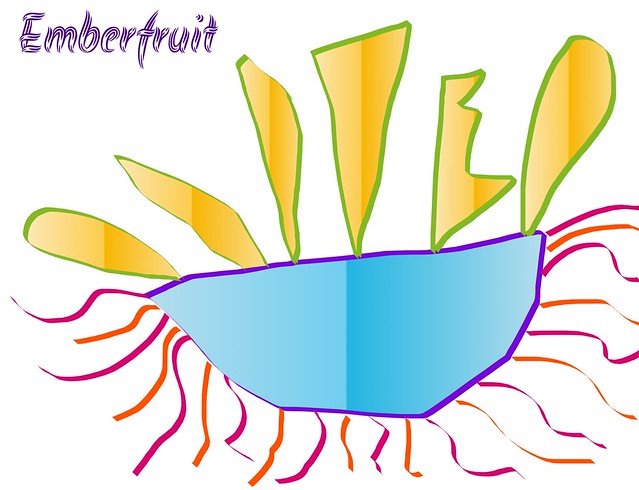

This is what I came up with:

I then went into Flickr’s Public Domain image search to find a Solar Eclipse image to use as a background. I found one that I liked a lot.

Solar Eclipse 2017 flickr photo by Jamie Kohns shared into the public domain using Creative Commons Public Domain Dedication (CC0)

Finally, I went into LunaPic (an online image editor) and used its Blender Tool (find it under Effects) to layer my Blackout Poem with the Solar Eclipse image, creating the final project (see above).
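For the curious, blending two images usually comes down to mixing each pair of pixels by some percentage. Here is a rough sketch of that idea in plain Python, with images represented as small grids of (red, green, blue) values. I am assuming a simple weighted average here; LunaPic may well do something different, and the names `blend_pixel`, `blend_images`, and `alpha` are just for this example.

```python
def blend_pixel(top, bottom, alpha=0.5):
    """Mix two (r, g, b) pixels; alpha is how much of `top` shows through."""
    return tuple(round(t * alpha + b * (1 - alpha)) for t, b in zip(top, bottom))


def blend_images(top, bottom, alpha=0.5):
    """Blend two same-sized images given as lists of rows of (r, g, b) pixels."""
    if len(top) != len(bottom) or any(
        len(row_t) != len(row_b) for row_t, row_b in zip(top, bottom)
    ):
        raise ValueError("both images must have the same dimensions")
    return [
        [blend_pixel(p, q, alpha) for p, q in zip(row_t, row_b)]
        for row_t, row_b in zip(top, bottom)
    ]


# A one-row "poem" image (black bar next to a white word) over an orange "eclipse".
poem_layer = [[(0, 0, 0), (255, 255, 255)]]
eclipse_layer = [[(200, 100, 0), (200, 100, 0)]]
blended = blend_images(poem_layer, eclipse_layer, alpha=0.5)
```

An `alpha` of 1.0 would show only the poem layer and 0.0 only the eclipse photo; values in between let the photo glow through the blacked-out text.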

Peace (even when the sky goes dark),

Kevin